BOOT: Hello Systems!

1. ENTRY_POINT

making.systems will mainly consist of deep dives into the lowest levels of the computing stack. My goal is simple: to prove that developers can and should understand every level of abstraction they rely on.

We are currently living in an era where fundamental, structural reasoning is increasingly being outsourced to opaque, statistical models hosted in clouds we don’t control. I want to help you reclaim that understanding.

2. THE_ABSTRACTION_TRAP

Modern software is becoming a brittle tower of AI-generated dependencies, built on top of platforms we no longer bother to understand.

My concern isn’t about whether current AI systems are “smart” or “stupid”, but rather how our over-reliance on them erodes human technical literacy. Here are a few foundational premises that drive my skepticism toward treating AI as a replacement for human engineering:

2.1 BELIEF_01: THE IMITATION ASYMPTOTE

Current LLM capabilities are largely the result of massive pre-training runs: essentially, finding complex statistical connections between concepts that human experts have already discovered and documented.

We are rapidly approaching the “Data Wall”. As the supply of high-quality human text depletes, AI development is nearing the horizontal asymptote at the top of the S-curve of imitation-based training. While the industry is attempting to pivot to synthetic data, the scaling laws for synthetic data remain unproven. When the models run out of human records to ingest, human experts will be strictly necessary to verify new, ground-truth knowledge and push the frontier forward.
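
As a rough illustration of what a horizontal asymptote on an S-curve means, one common way to model such a curve is the logistic function. This is only a sketch: the symbols $C$ (capability), $D$ (amount of human-written training data), $C_{\max}$ (the ceiling), $k > 0$ (steepness), and $D_0$ (midpoint) are assumptions introduced here for illustration, not measured quantities, and nothing guarantees that real model capability follows this exact form.

$$
C(D) = \frac{C_{\max}}{1 + e^{-k (D - D_0)}}, \qquad \lim_{D \to \infty} C(D) = C_{\max}
$$

Under this toy model, the marginal gain $\frac{dC}{dD} = k\,C\left(1 - \frac{C}{C_{\max}}\right)$ shrinks toward zero as $C$ approaches $C_{\max}$: more data of the same kind buys less and less.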

2.2 BELIEF_02: THE NECESSITY OF CAUSAL REASONING

Following the logic of the data wall, true domain experts aren’t going anywhere. In fact, their value will likely compound.

If AI systems must transition from simply recalling information to attempting novel problem-solving, we will desperately need engineers who retain rigorous logical and causal reasoning skills. The developers who refuse to outsource their foundational understanding will be the only ones equipped to audit, correct, and architect around these systems.

2.3 BELIEF_03: THE UNCERTAINTY OF ALIGNMENT

Furthermore, if we assume that AI does bypass the data wall and achieves explosive, exponential growth through unmapped reinforcement learning, alignment becomes a massive unknown. The idea that we could predictably constrain the emergent behaviors of a system far more complex than its creators seems dubious.

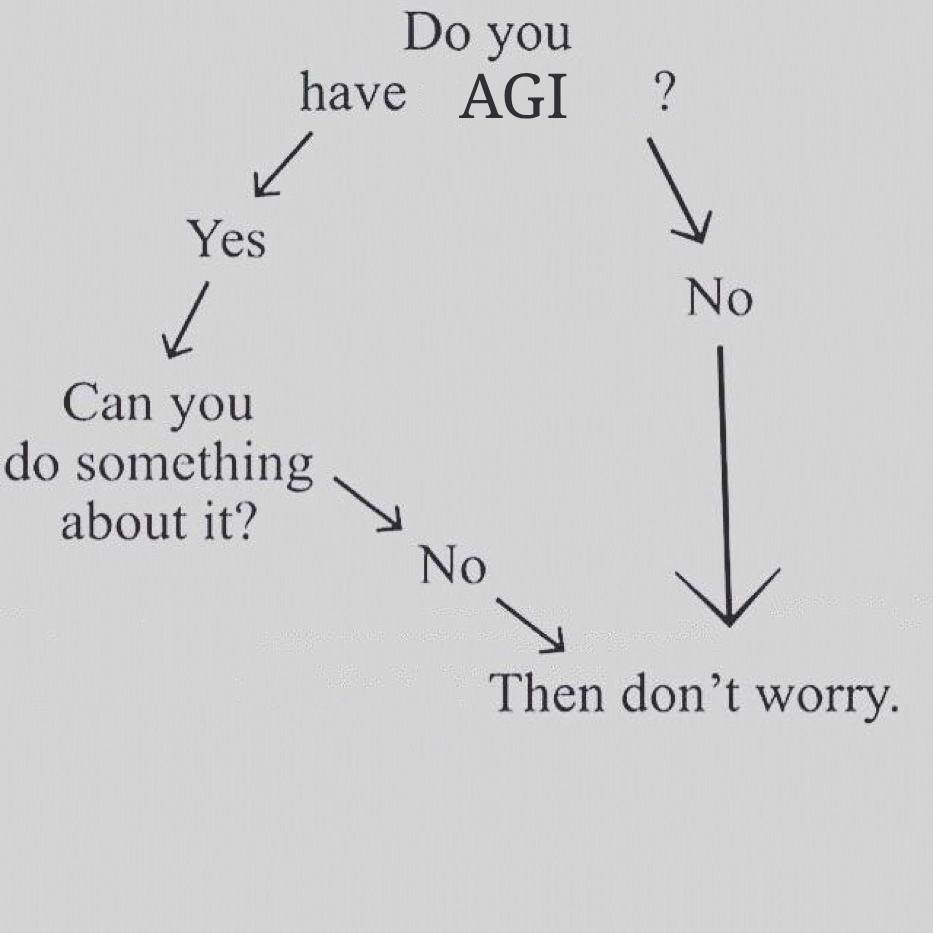

In fact, I believe that if we ever do reach that self-reinforcing AGI learning phase, there is basically nothing humanity can do. We’ll become pure observers of our own fate. This sounds scary, but it’s actually not!

Just follow the graph above when it comes to AI becoming superintelligent!

CONCLUSION: Know your stack. Be the expert both humans and AI can rely on.